Contextual Computing: Why Your Next Favorite App Won’t Have an Interface

For nearly two decades, the “App Store” model has trained us to find an icon, tap it, and navigate a series of menus to get what we want. In 2026, this is increasingly seen as “digital friction.”

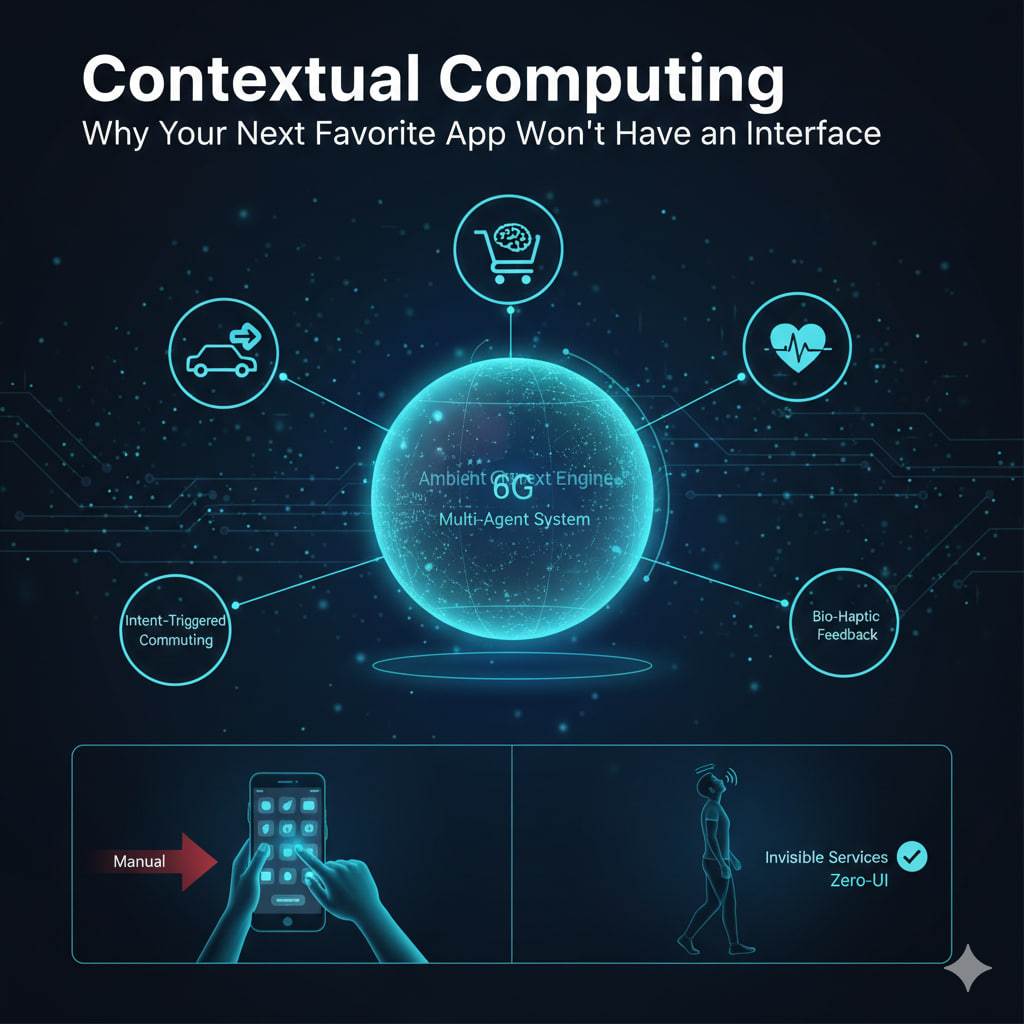

We are entering the era of Contextual Computing (or “Zero-UI”). The goal is no longer to build a better interface, but to make the interface disappear entirely. Your “apps” are becoming invisible services that live in the background, triggered by your location, biometrics, and intent.

The 2026 Engine: 6G and Multi-Agent Systems

Two technologies have finally made “invisible apps” reliable enough for daily use:

- 6G Spatial Intelligence: Unlike 5G, 6G is designed around terahertz-range frequencies that can act like a high-resolution “environmental radar.” Your devices can “see” your physical context (recognizing that you’ve sat at your desk, entered a grocery store, or lain down to sleep) without using invasive cameras.

- Agentic AI: Instead of a static app, you have “Agents” that communicate with each other. If your “Flight Agent” detects a delay, it automatically negotiates with your “Ride-Share Agent” and “Hotel Agent” to rebook your entire trip before you even check your phone.
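
One way to picture that agent-to-agent handoff is a simple publish/subscribe pattern: the flight agent announces a delay once, and any other agent that cares reacts on its own. The sketch below is only an illustration under that assumption. The `Bus` class, the `flight.delayed` topic name, and both agent functions are hypothetical, not any real agent framework's API.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Event:
    topic: str      # hypothetical topic name, e.g. "flight.delayed"
    payload: dict


class Bus:
    """In-process stand-in for whatever channel real agents would share."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Callable[[Event], None]]] = defaultdict(list)
        self.log: list[str] = []

    def subscribe(self, topic: str, handler: Callable[[Event], None]) -> None:
        self._handlers[topic].append(handler)

    def publish(self, event: Event) -> None:
        # Deliver the event to every handler registered for its topic.
        for handler in self._handlers[event.topic]:
            handler(event)


def make_ride_agent(bus: Bus) -> None:
    def on_delay(event: Event) -> None:
        bus.log.append(f"ride: pickup moved by {event.payload['delay_min']} min")
    bus.subscribe("flight.delayed", on_delay)


def make_hotel_agent(bus: Bus) -> None:
    def on_delay(event: Event) -> None:
        bus.log.append("hotel: late check-in requested")
    bus.subscribe("flight.delayed", on_delay)


bus = Bus()
make_ride_agent(bus)
make_hotel_agent(bus)
# The flight agent publishes once; it does not need to know who reacts.
bus.publish(Event("flight.delayed", {"delay_min": 90}))
print(bus.log)
```

The point of the design is decoupling: adding a “Calendar Agent” later would mean one more `subscribe` call, with no change to the flight agent.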

From “User-Initiated” to “Intent-Triggered”

In 2026, the best user experience is the one that never requires a user to “experience” it.

| Activity | The 2022 Experience (Manual) | The 2026 Experience (Contextual) |
| --- | --- | --- |
| Commuting | Open Maps → Type destination → Select route. | Your car/bike knows your 9 AM meeting is in a new building; it pre-loads the route and warms the seat as you walk toward it. |
| Grocery Shopping | Open App → Check list → Scan items. | Smart-Shelf Sensing: As you put items in your bag, they are added to a virtual cart. Your “Budget Agent” sends a haptic pulse to your wrist if you’re overspending. |
| Health & Wellness | Open Fitness App → Log workout → Log sleep. | Bio-Haptic Feedback: Your wearables detect rising cortisol levels and automatically dim the lights and play “Focus Audio” in your workspace. |
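
Under the hood, an “intent-triggered” experience like the ones in the table can be thought of as a set of rules evaluated against a snapshot of your context. Here is a minimal sketch of that idea. Everything in it is an assumption made for illustration: the `Context` fields, the `stress_score` stand-in for a biometric signal, the 0.7 threshold, and the action names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Context:
    location: str               # hypothetical label, e.g. "grocery_store"
    cart_total: float = 0.0
    budget: float = 0.0
    stress_score: float = 0.0   # 0.0 to 1.0, invented stand-in for a biometric reading


@dataclass
class Rule:
    name: str
    condition: Callable[[Context], bool]
    action: str


RULES = [
    Rule("budget_nudge",
         lambda c: c.location == "grocery_store" and c.cart_total > c.budget,
         "haptic_pulse"),
    Rule("calm_workspace",
         lambda c: c.location == "desk" and c.stress_score > 0.7,
         "dim_lights"),
]


def triggered_actions(ctx: Context) -> list[str]:
    """Return the actions whose conditions hold for this context snapshot."""
    return [rule.action for rule in RULES if rule.condition(ctx)]


print(triggered_actions(Context("grocery_store", cart_total=82.5, budget=60.0)))
print(triggered_actions(Context("desk", stress_score=0.4)))
```

The first call returns the budget nudge because the cart exceeds the budget; the second returns nothing, which is the desired outcome most of the time. A contextual service that stays silent is working as intended.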

The “Invisible” Tech Stack

- Ear-worn AI (Hearables): The primary “interface” is now directional audio. Your apps “talk” to you only when necessary.

- Neural Wearables: Wristbands (like the one Meta pairs with its Ray-Ban smart glasses) that read motor-nerve signals at your wrist, allowing you to “click” or “scroll” in mid-air without touching a physical screen.

- Confidential Computing: To make this work, apps need to know a great deal about you. To prevent data leaks, 2026 apps run in “Secure Enclaves”: hardware-isolated, encrypted environments designed so that not even the app developer can see your raw data.

The Death of the “App Search”

In the Contextual Era, you don’t “browse” for apps. Apps are just-in-time services.

“In 2026, an app isn’t a destination; it’s a behavior.”

If you walk into a museum, the “Museum App” shouldn’t need to be downloaded. It should simply “attach” itself to your AR glasses or earbuds, providing narration based on exactly what you are looking at, then “detach” and delete its cache when you walk out the exit.
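
The attach, use, detach lifecycle maps neatly onto a scoped session that always cleans up after itself. The sketch below uses a Python context manager to show the shape of it. The `EphemeralService` class and its `narrate` method are hypothetical names invented for this example, not part of any real AR or earbud platform.

```python
from contextlib import contextmanager


class EphemeralService:
    """A hypothetical just-in-time service with a session-scoped cache."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.cache: dict[str, str] = {}
        self.attached = False

    def narrate(self, exhibit_id: str) -> str:
        if not self.attached:
            raise RuntimeError(f"{self.name} is not attached")
        # Cache narration so repeat glances at the same exhibit are cheap.
        if exhibit_id not in self.cache:
            self.cache[exhibit_id] = f"Narration for {exhibit_id}"
        return self.cache[exhibit_id]


@contextmanager
def attached(service: EphemeralService):
    service.attached = True          # visitor walks in
    try:
        yield service
    finally:
        service.cache.clear()        # visitor walks out: wipe session data
        service.attached = False


museum = EphemeralService("museum-guide")
with attached(museum) as guide:
    print(guide.narrate("exhibit-12"))
print(museum.cache, museum.attached)
```

Because the cleanup sits in a `finally` block, the cache is wiped even if something fails mid-visit, which is the property a “detach and delete” promise depends on.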

The Challenge: The “Clarity” Gap

The biggest hurdle for Contextual Computing is Trust. If things “just happen” in the background, users can feel a loss of agency.

- The Solution: 2026 design focuses on “Micro-Confirmations.” Instead of a full menu, you get a subtle vibration or a single word in your ear: “Rebooked?” A simple nod of your head (detected by your earbuds) confirms the action.
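
In code terms, a micro-confirmation is just a gate in front of an action: consequential steps pause for a yes/no signal, and trivial ones run straight through. This is a rough sketch of that gate, assuming the decision about which actions need confirmation is made elsewhere. The function name and the `nod` / `no_nod` stand-ins for a head-nod detector are hypothetical.

```python
from typing import Callable


def run_with_micro_confirmation(
    prompt: str,
    action: Callable[[], str],
    needs_confirmation: bool,
    ask: Callable[[str], bool],
) -> str:
    """Run `action`, pausing for a yes/no signal only when it matters."""
    if needs_confirmation and not ask(prompt):
        return "cancelled"
    return action()


# Stand-ins for a head-nod detector: one that nods, one that does not.
nod = lambda prompt: True
no_nod = lambda prompt: False

rebook = lambda: "rebooked"
print(run_with_micro_confirmation("Rebooked?", rebook, True, nod))
print(run_with_micro_confirmation("Rebooked?", rebook, True, no_nod))
print(run_with_micro_confirmation("Route loaded", lambda: "loaded", False, no_nod))
```

The third call shows the other half of the trade-off: low-stakes actions skip the prompt entirely, so the user is only interrupted when agency is actually at stake.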
